This year BLUEfin and other BANDAI NAMCO Group companies will showcase an interactive Gundam Booth. Located in the Main Show Hall, the “Gundam 40th Beyond” Booth (#2611) will be a key presence this year and will celebrate the 40th anniversary of the launch of the original Mobile Suit Gundam anime series. The booth will highlight upcoming and existing Gundam products from renowned BANDAI divisions including BANDAI SPIRITS Hobby (GUNPLA), Tamashii Nations (Action figures), Shokugan (Small toys), Gashapon (Capsule and small toys), BANDAI Apparel and BANDAI NAMCO Entertainment (Mobile game/Console game). A massive 9-foot-tall RX-78-2 Gundam statue will be stationed inside the Gundam Booth for photo opportunities.

Other notable attractions include FREE giveaways of a Gundam Visor sponsored by GUNDAM.INFO and small Gundam Shokugan items, as well as a workshop area where Anime Expo attendees can build a FREE Gundam Plastic Model kit (GUNPLA) and try out the new Gundam Marker Airbrush. Entries from the GUNPLA BUILDERS WORLD CUP (GBWC) will also be on display, with the winner announced at a separate panel on Saturday, July 6th at 8:30pm (Room LP4 411) with famous modeler Katsumi “Meijin” Kawaguchi from BANDAI SPIRITS Hobby. It is also the first time for us to display a prototype sample of the new 1/100 GUNDAM BARBATOS model kit. Browse a vivid and extensive collection of Gundam Plastic Model kits (GUNPLA) from BANDAI SPIRITS Hobby. Be sure to use #BluefinBrands when you post a pic on social media for a chance to win one of BLUEfin’s exclusive Anime Expo prizes.

Stop by the BLUEfin Booth #2411 to browse a comprehensive collection of products.

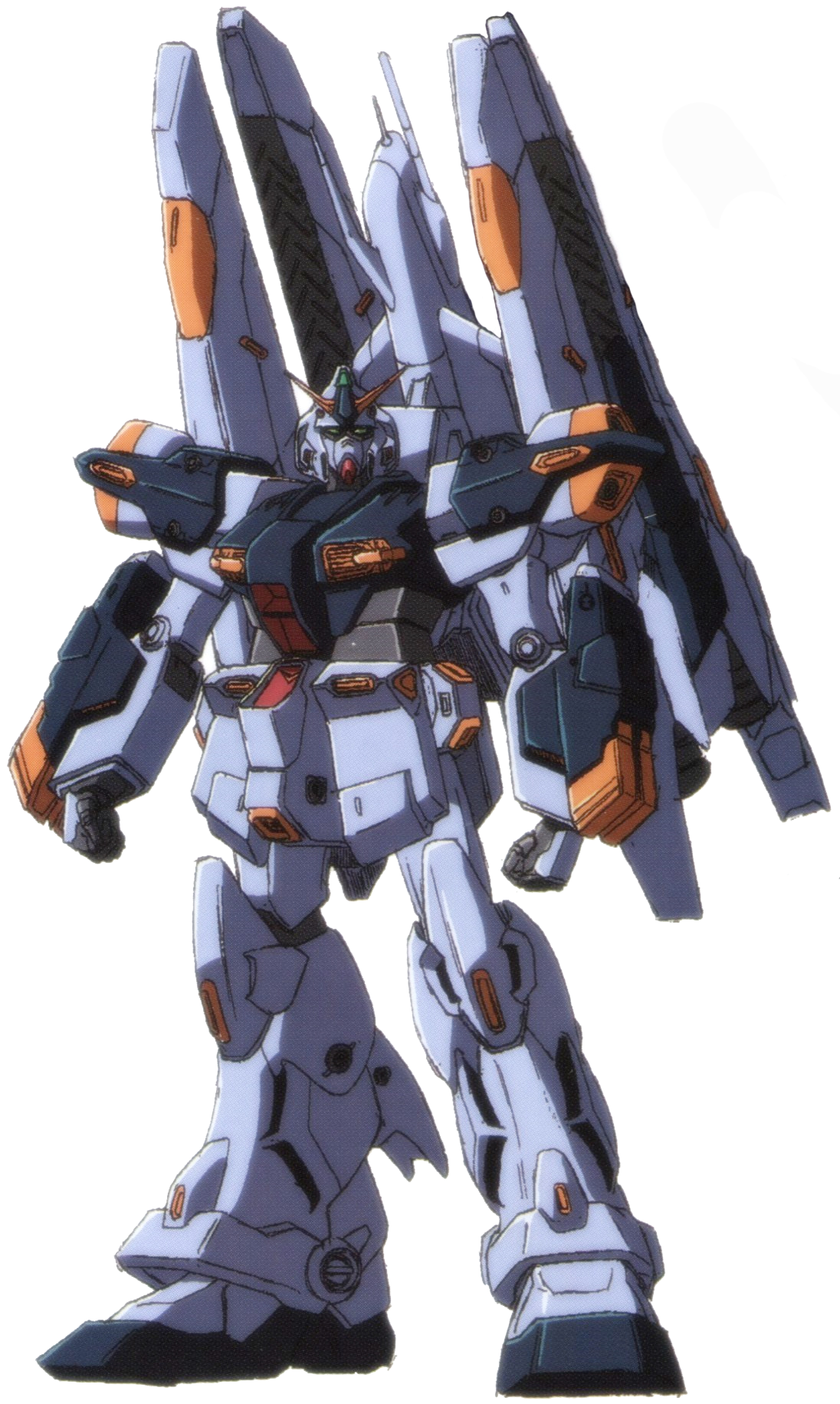

GUNDAM NT REGAL SALISBURY SERIES

Leading Collectibles Distributor Marks 40th Anniversary Of Mobile Suit Gundam Series With An Interactive Booth, GUNPLA Workshops, Panels And A Wide Array Of Collectibles Available For Purchase

Anaheim, CA, June 27th, 2019 – BANDAI NAMCO Collectibles (BLUEfin), a BANDAI NAMCO Group company and the leading North American distributor of toys, collectibles, and hobby merchandise from Japan and Asia, details its exhibit plans and retail exclusives for Anime Expo 2019.

The embedding lookup is just a shortcut for the matrix multiplication. The lookup table is trained just like any weight matrix as well.

Embeddings aren't only used for words, of course. You can use them for any model where you have a massive number of classes. A particular type of model called Word2Vec uses the embedding layer to find vector representations of words that contain semantic meaning.
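To make "trained just like any weight matrix" concrete, here is a minimal sketch in plain Python. It assumes a one-hot input, in which case the gradient of the loss with respect to the weight matrix is zero everywhere except the row of the "on" unit. The function name `sgd_step_on_row`, the toy weights, and the gradient values are all hypothetical, chosen only for illustration.

```python
def sgd_step_on_row(weights, index, grad_hidden, learning_rate):
    """Apply one SGD step to the embedding row for `index`, in place.

    For a one-hot input, the gradient with respect to the weight matrix is
    zero everywhere except row `index`, where it equals the gradient with
    respect to the hidden layer values (`grad_hidden`).
    """
    row = weights[index]
    for j, g in enumerate(grad_hidden):
        row[j] -= learning_rate * g


# Toy embedding table: 3 "words", embedding dimension 2 (made-up values).
weights = [[0.5, 0.5], [1.0, -1.0], [0.0, 2.0]]
before = [list(r) for r in weights]

# Pretend backpropagation produced this gradient for the hidden layer.
sgd_step_on_row(weights, index=1, grad_hidden=[2.0, -4.0], learning_rate=0.25)

print(weights[1])               # only this row has changed
print(weights[0] == before[0])  # other rows are untouched
```

Only the row that was looked up receives an update, which is one reason embedding layers stay cheap to train even with very large vocabularies.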

Instead of doing the matrix multiplication, we use the weight matrix as a lookup table. This works because multiplying a one-hot encoded vector by a matrix returns the row of the matrix corresponding to the index of the "on" input unit. We encode the words as integers, for example "heart" is encoded as 958, "mind" as 18094. Then to get hidden layer values for "heart", you just take the 958th row of the embedding matrix. This process is called an embedding lookup and the number of hidden units is the embedding dimension.

The embedding lookup table is just a weight matrix. The embedding layer is just a hidden layer.
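The equivalence between the one-hot matrix multiplication and a row lookup can be checked directly. The sketch below uses plain Python lists and a toy 5-word table rather than the integer codes from the text. The helper names `matvec_one_hot` and `embedding_lookup` are hypothetical. In TensorFlow, the same row selection is what `tf.nn.embedding_lookup` performs.

```python
def matvec_one_hot(one_hot, weights):
    """Multiply a one-hot row vector by a weight matrix (one row per word)."""
    n_cols = len(weights[0])
    return [
        sum(one_hot[i] * weights[i][j] for i in range(len(weights)))
        for j in range(n_cols)
    ]


def embedding_lookup(index, weights):
    """Return a copy of the row of the weight matrix for `index`."""
    return list(weights[index])


# Toy embedding table: 5 words, embedding dimension 3 (made-up values).
weights = [
    [0.1, 0.2, 0.3],
    [0.4, 0.5, 0.6],
    [0.7, 0.8, 0.9],
    [1.0, 1.1, 1.2],
    [1.3, 1.4, 1.5],
]

word_index = 3
one_hot = [1.0 if i == word_index else 0.0 for i in range(len(weights))]

via_matmul = matvec_one_hot(one_hot, weights)
via_lookup = embedding_lookup(word_index, weights)

print(via_matmul == via_lookup)  # both routes give the same hidden values
```

The multiplication does work proportional to the whole table, while the lookup reads a single row.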

When you're dealing with words in text, you end up with tens of thousands of classes to predict, one for each word. Trying to one-hot encode these words is massively inefficient: you'll have one element set to 1 and the other 50,000 set to 0. The matrix multiplication going into the first hidden layer will have almost all of the resulting values be zero.

To solve this problem and greatly increase the efficiency of our networks, we use what are called embeddings. Embeddings are just a fully connected layer like you've seen before. We call this layer the embedding layer and the weights are embedding weights. We skip the multiplication into the embedding layer by instead directly grabbing the hidden layer values from the weight matrix.
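A rough sense of the waste can be had by counting. The sketch below builds one one-hot vector for a vocabulary of 50,001 words and compares how many multiplications a dense layer would perform with how many involve the single non-zero input. The word index and the embedding dimension of 200 are arbitrary assumptions, not values from the text.

```python
def one_hot(index, vocab_size):
    """Return a list of zeros with a single 1 at `index`."""
    vec = [0] * vocab_size
    vec[index] = 1
    return vec


vocab_size = 50_001   # one element set to 1, the other 50,000 set to 0
embedding_dim = 200   # hypothetical number of hidden units

vec = one_hot(958, vocab_size)  # 958 is an arbitrary word index
nonzero = sum(1 for v in vec if v != 0)
zeros = vocab_size - nonzero

# Multiplications a dense layer performs for this one input vector,
# versus the ones that actually contribute to the result.
dense_multiplies = vocab_size * embedding_dim
useful_multiplies = nonzero * embedding_dim

print(zeros, dense_multiplies, useful_multiplies)
```

Under these assumptions, about ten million multiplications are performed to obtain 200 values that could simply be read out of the weight matrix.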

In this notebook, I'll lead you through using TensorFlow to implement the word2vec algorithm using the skip-gram architecture. By implementing this, you'll learn about embedding words for use in natural language processing. This will come in handy when dealing with things like machine translation.

Here are the resources I used to build this notebook. I suggest reading these either beforehand or while you're working on this material.

- A really good conceptual overview of word2vec from Chris McCormick
- First word2vec paper from Mikolov et al.
- NIPS paper with improvements for word2vec also from Mikolov et al.
- An implementation of word2vec from Thushan Ganegedara
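As a preview of the skip-gram architecture, the sketch below generates the (center word, context word) training pairs that a skip-gram model learns from. It is a simplified illustration and not the notebook's implementation. The function name `skip_gram_pairs` is hypothetical, and the fixed window size is an assumption made for clarity (implementations often sample the window size at random).

```python
def skip_gram_pairs(tokens, window_size):
    """Return (center, context) pairs for every token and its neighbours.

    Each token is paired with every other token at most `window_size`
    positions to its left or right.
    """
    pairs = []
    for i, center in enumerate(tokens):
        start = max(0, i - window_size)
        stop = min(len(tokens), i + window_size + 1)
        for j in range(start, stop):
            if j != i:
                pairs.append((center, tokens[j]))
    return pairs


tokens = ["the", "quick", "brown", "fox"]
pairs = skip_gram_pairs(tokens, window_size=1)

for center, context in pairs:
    print(center, "->", context)
```

In a full model, the center word would be converted to its integer code, passed through the embedding layer, and used to predict the context word.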